Experience Research is an indispensable tool for UX practitioners to understand user behavior. We at Net Solutions have always been deeply interested in understanding all facets of human experience and how it applies to the design, build, and growth of digital products and services.

Recently, we had an interesting conversation with Meena Kothandaraman, co-founder of the experience research consultancy twig+fish, about the methods, tools, and techniques used by user experience researchers, and what it takes to get a deeper understanding of user behavior.

With over 25 years of experience as an Experience Strategist, Meena teaches the Human Factors and Information Design graduate program at Bentley University, Boston, and has a deep understanding of all things related to experience research. Here we present excerpts from the interview:

Tell us about yourself? How did you start twig+fish?

twig+fish research practice is a two-person qualitative research practice. I am a qualitative researcher who has always been fascinated by the human story. I’m curious about people in general, and how human-centric designs are created. As a researcher, I am interested in learning about more than just people’s interactions with products and services: I want to learn about who they are and observe evidence that shows their behavioral patterns and beyond. This truly provides more insight into how to design meaningful products.

I have always been fascinated by how the human story relates to an organization and its offerings. We use the word “offerings” as it represents a product, a service, interface or space. With any of these “offerings”, our goal is to understand the person, and their perception and interaction with the offerings themselves.

I consulted with different companies for about 10 years before going independent in 2000. At that time, I wanted to focus on having a family, but also maintaining a nice work-life balance. Work-life balance has been very, very important to me. I feel like it’s also affected my perspective of how I do my work because it’s about keeping balance in everything: understanding how we can take care of an organization while not losing sight of the various people who create and consume the offerings. As we started twig+fish, our focus fell on who these people are, and what they are aspiring to do both internally and externally to an organization.

I am also a Lecturer at Bentley University, in Boston. I’ve been in the graduate program for 17 years. I teach a course on qualitative research, and how it is positioned and elevated in organizations (of course!). It’s great to be able to discuss and hear from students who are often quite skilled. I thoroughly enjoy that as well. I’ve helped build that program and expanded it to the West Coast, in California. I also guest lecture in MIT’s Undergraduate Mechanical Engineering program, to help engineers learn to focus on people first as they build products. Always so much fun! That’s a little bit of my background.

That’s Bentley University’s Human Factors and Information Design graduate program?

Yes indeed! It’s now the top program in the world which is very exciting because as I understand it, the university is probably not so well-known as some other universities in Boston. It’s very exciting that we have a presence not only in Boston but also in San Francisco as well. I have had the pleasure of lecturing in other universities as well across the country, but the Bentley experience has been especially wonderful.

That’s great! So, you started off from Canada, just looking at your profile on the website, you have an MS in Information Resource Management from Syracuse University. So, do you miss Canada?

I do. Especially because of the current president (laughs) – but – no politics! I definitely miss Canada. My parents are still there, my older sibling is there, and my second sibling is in the US with me. I try to get back to Canada fairly often. I participate as often as possible in Canadian conferences. Net Solutions, if you are interested, there is a really good conference in my hometown, Ottawa. It is called CanUX (pronounced “canucks”).

Yes, yes I have heard of it. Saw it on Twitter.

Yeah! It’s awesome! Please put that on your radar. It’s in the October end-November beginning timeframe. It’s a fabulous conference and really well organized. And, it’s the BEST city to visit. (No bias, haha!)

Right. So, I saw somewhere that you have visited India 25 times and maybe since then, you have visited India many more times. So, do you have any family here as well?

I do. Actually, if you can believe, I made my 49th trip to India last year. I have come so many times. My mom is from Jaipur and my Dad is from Kottayam in Kerala. I got a chance to enjoy all aspects of India thanks to my parents. I speak two Indian languages and absolutely love coming back. It was important for my parents for us to appreciate Indian culture growing up in Canada. I am also an Indian classical vocalist and violinist – absolutely love the music! My husband is originally from Coimbatore. Thanks to him, it’s been wonderful to be able to come back as many times as I have. I feel quite fortunate.

That’s a really interesting background. You mentioned qualitative research. There’s a word that we hear quite often: “Ethnography”. So, what does that mean to you?

Ethnography is really trying to understand the context within which people are functioning. When we talk about human behavior, we describe it through Behaviors (what people do), Aptitudes (what people can do, what they know), Attitudes (what people think), and Emotions (what people feel).

Ethnographic research allows us to embed ourselves in somebody’s reality and really uncover all these perspectives, with the hopes of understanding how a person processes information in their own context. It’s about getting a very rich understanding of how these perspectives play together.

When you start a research project, how do you scope the work?

That is an excellent question. As I had mentioned, twig+fish is a two-person research practice. My colleague Zarla Ludin and I are constantly discussing how research scope is defined. Traditionally, projects have been scoped through some sort of mandate or RFP – this means stakeholders telling us what needs to be done, what method must be used, what deliverables must be produced and in what timeframe and budget. What we have realized is that many projects are scoped in error. In those situations, research often delivers output that doesn’t address the real questions needing answers. The result is research that is rendered useless and, overall, a poor perception of research as a strategic tool.

As part of the work we do, we are changing the way projects are scoped. Our goal is to position research as a strategic tool. We focus on documenting unknowns from stakeholders in a session that runs about 1-2 hours. We are able to plot stakeholder questions against a framework we created that helps uncover the spirit or intent of the question, as well as the intended output of the question. The conversation that is generated from this quick discussion often reveals the actual questions that need answering, as well as a valuable discussion that helps non-researchers understand credible ways of getting meaningful answers. With this approach, research is scoped appropriately and the output is something that can be applied forward meaningfully.

We run this alignment session as our first phase (of five phases) for all our projects. Using the framework, we are able to scope the client work right in front of them. This always results in more meaningful conversations. Net Solutions, you might remember the five phases from my presentation in Hungary.

The goal is to bring more communication between researchers and non-researchers. Externalizing what goes on in the researcher’s mind as they receive questions and make considerations for a study promotes collaboration between non-researchers and researchers to craft better studies overall.

Is the type of project also a consideration? Sometimes a company wants to launch a new product, and sometimes whatever they have with them is not working and they want to make improvements. So, does your research methodology change based on the type of project which you’re undertaking?

This is a great follow-up question to what I was speaking to when I used the words “spirit” and “intent”. Whether we like to acknowledge it or not, when embarking on a research study, stakeholders and non-researchers in general have some sort of “agenda”. Though this word might have a negative connotation, it is meant to identify what the stakeholder hopes to achieve when obtaining research results. In launching a new product, what is the mindset and expectation of the stakeholder? It might be very different from that of a stakeholder making minute improvements to an existing version of their product.

Often, launching a new product has to start with a deeper and more holistic understanding of people, and how this “new product” might fit into their world. That means researchers will study a landscape of people (possibly users and non-users) who help inspire the direction of the new product. Conversely, when making improvements to a product, the focus is really more on the product, and whether the person using it can comprehend those changes (i.e., how well the changes are presented, and whether they make sense). Methodologies will vary based on the lens used: studying the people vs. studying their interaction with a product.

Research methodologies need to be selected based on the questions that are being answered. If the questions are vastly different, and we apply the same methodology over and over, that is like using a hammer as a tool regardless of the type of hardware we have in our hand. For this reason, we place so much emphasis on understanding and documenting the actual research question.

Once you are into a project and know what the questions are, the next stage is to start. How do you actually begin, given that the challenge is to talk to the right set of people?

Another great question. Once we have established the questions to be answered, and the overall scope of the research, the next major task is to figure out who to approach to answer the questions (commonly termed the “sample” in a research study). An unimpressive trend is that most companies go straight to their user population for everything. It is like the hammer analogy I used before. Asking the same users all sorts of questions very rarely yields a broad horizon of answers. The answers tend to be repetitive and limiting. We also have to identify the sample based on the questions that need to be answered. If the spirit of the question is tactical (“let’s make improvements to the website”), then it makes sense to just approach the existing users of a website. If we are coming up with a new product, however, we have to think about people in analogous spaces, perhaps competitive users, or even people who shun or exploit the space the product lives within, to gain inspiration for this new product. We need a way to be less limiting and more discovery-oriented.

In terms of the “right set of people,” as you put it, this is another conversation we have in our Phase 1 Align session, where we uncover who those “right people” might be. The more variables that are attached to defining the sample, the more complex the recruit. The more complex the recruit, sometimes, the higher the cost. These are all things we must be transparent about when discussing research study designs with stakeholders. As my colleague Zarla loves to say, part of our goal as researchers is to make non-researchers “more sophisticated consumers of research.” Understanding who we study is a big part of that. It is not as easy as just going to the same five people repeatedly!

Right. Some of our customers are from a marketing background, where sample sizes are very important. When we tell them, “Look, we are going to do the research with only 5-6 users for this particular project,” they always ask us: is that an adequate size?

The “5-6 people” figure is a number that has been misinterpreted in various situations. That number is meant to indicate “per group” or “bucket” of people. When recruiting, the criteria we apply help us understand how many people to recruit. Recruiting criteria often get broken into behaviors, abilities, demographics, and psychographics. For example, if we talked about the MakeMyTrip website and incremental changes to it, what variables would we need to consider?

Behavior – People who book online

Ability – People who know how to price compare between airlines online

Demographics – People aged 21 – 45

Psychographics – People who believe better price options are possible with online booking

If we went with 5-6 people who match these criteria, that would be fine. The more granularity we introduce, the more people we need. For example, we might break the behavior criterion into two categories: people who booked online within the past 2 weeks, and those who booked online in the past 2 days. This division creates two buckets of people, which introduces another set of 5-6 people. The recruiting criteria definition needs to be structured in a way that shows credibility for the number of study participants, and rigor in why it should be 5, or greater than 5.

The 5-6 figure was also introduced purely for validation research, where there might be less need for varied criteria when recruiting (since it is more about the product interaction than the person themselves). Indeed, during validation research, after 5-6 people, there are diminishing returns in what is learned. This does not hold true for ethnographic methods as mentioned earlier, where the “5-6” might be different based on how the recruiting variables are defined.

To answer your question – 5-6 is an adequate size if there are fewer variables with singular criteria. But that isn’t always the case. It depends on the complexity of the research question as well!
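The per-bucket arithmetic described above can be sketched in a few lines of Python. This is only an illustration, not a tool twig+fish uses: the function name `participants_needed` and the dictionary layout are hypothetical, and it assumes that every split in a criterion multiplies the number of buckets, which is one possible reading of the answer.

```python
from math import prod

def participants_needed(levels_per_criterion, per_bucket=(5, 6)):
    """Estimate the recruit size from the number of levels in each criterion.

    Assumption (not from the interview): buckets are the full cross of all
    criterion levels, so the bucket count is the product of the level counts.
    Returns (buckets, minimum participants, maximum participants).
    """
    buckets = prod(levels_per_criterion.values())
    low, high = per_bucket
    return buckets, buckets * low, buckets * high

# The MakeMyTrip example: one level per criterion means a single bucket.
single = {"behavior": 1, "ability": 1, "demographics": 1, "psychographics": 1}

# Splitting behavior into "booked in past 2 weeks" and "booked in past 2 days".
split = {**single, "behavior": 2}

print(participants_needed(single))  # (1, 5, 6)
print(participants_needed(split))   # (2, 10, 12)
```

With a single bucket the familiar 5-6 holds; splitting one criterion in two doubles the recruit to 10-12, which is why the number has to be justified per study.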

Just one follow up question. Many times customers say that we have a website, let’s say it’s a pizza company, and they sell pizza online and they want to improve their conversion rates because they say so many people are coming on the website, but still our conversion rate is low. And, then we suggest to them that it’ll be good if they start with some kind of usability testing. But to begin with, we also recommend doing a survey to get an NPS score. So, do you think a survey could help here?

Yes, surveys can help, but truthfully, they offer very limited information that can be hard to act on or can even be misinterpreted. Surveys are a great tool to provide numbers; however, we do not get insight into the rationale, or the “why” people might be abandoning a site and lowering conversion rates. Starting with validation research, or usability testing, is an option, and often can provide a baseline. But it depends again on the type of questions asked: if we are looking strictly at product interaction and whether a feature has been designed well or not, usability testing is great. If we are trying to understand why people are not buying a pizza online, usability testing will only give us part of the answer. We need answers that tell us about people’s expectations of buying online in general and, furthermore, of buying their meals online. This will help give more color and detail to their interaction with the website. As we firmly believe, people are defined by much more than a product interaction.

Surveys, additionally, do not represent how people want to articulate their feedback. Most people “x” out on a survey, or simply put in quick answers to get rid of it. Are we to base changes on that output alone? To me, I would say again, that offers some information, but not the whole information to address the conversion questions.

What might be more valuable than a survey in your scenario is an unmoderated approach. As soon as customers come to the website, they can be intercepted with a message like, “Hi! We are doing a study and would like to record your session to learn about how to make your pizza ordering process better. We will give you Rs. 50 off on your pizza, and might have a few questions to ask you after.” Using this approach, you are likely to get more details, and the ability to record customer thoughts that can be acted on.

Very interesting, because we rely a great deal on remote research using online tools. We record what the user is doing on the website and maybe have a follow-up interview with them. So, do you think remote research is as effective as ‘being there’ and observing as they are doing things?

That is a question we get all the time and again, it depends on what the research question is. When the context does not contribute to answering the research question, we very often are comfortable in offering up remote research as a viable option. There are limitations to the type of data that can be obtained, but many times these limitations might not affect the research questions at all.

In the pizza example, the unmoderated approach could be done through a remote viewing, and pivot into a moderated approach with a researcher watching the user as they order their pizza. There can be an interaction that easily shows shortcomings of the design. If instead, the remote approach uncovers that there needs to be a greater understanding of “why people order food online?” – this question might be harder to answer through remote research. We might instead want to understand people’s realities, their contexts in their homes, their days after school or work, and why they choose to order online, and therefore, what would make it easier for them. This would require in-context work.

Talking about the actual method you use, in-depth interviews is one. Do you also shadow the users as they are going about their business? What are the different methods that you use?

When we discuss methods with stakeholders, we realize there is a lot of confusion, assumption, and interpretation of the typical methods. We speak of methods by isolating our sample, context and dynamic. Who is your sample (representative users, extreme users, etc.)? What is your context (in-context, out of context, longitudinal)? What is your dynamic (one-on-one, group, dyad, etc.)? We can couple this information with how people might communicate comfortably to articulate their thoughts: via the spoken word, the written word, visuals, acting, etc. This allows for many combinations of “methods” that can meaningfully be designed to fit a study. This also avoids our “running a focus group”, and then having a stakeholder review our work and realize that our understandings of a focus group are entirely different from one another. Again, we stress the importance of being transparent in our approach to research study design. In general, our sessions are filled with activities, to match the way people currently communicate.

What kind of activities do you organize?

If you give me one second, here I pinged a site link. It’s really a fabulous website to look at different tools. I can share an example of an activity we did with an insurance company. We decided the sample was focused on prospective customers, the context was to go to people’s homes, and the dynamic was individual/one-on-one sessions. Our goal was to learn about how people defined valuables, and how they categorized valuables in their home.

A logical and common approach would be to ask people the question directly: “How do you define what is valuable to you?” Though not wrong, it is a tough question to answer, and it is hard for a participant to unravel their thoughts in a few minutes. Additionally, with defining value, there are emotions at play. As researchers, we are interested in getting at those emotions as well. Our approach was to give them a quick exercise: “Your home is on fire, and you have to run quickly and tag whatever you feel is valuable to you in your home. Here are Post-it Notes, you have 3 minutes. GO!”

We had people do the exercise, and when they brought back the list, we asked for their rationale. Answers vary: “I don’t really know, but my mother-in-law gave this to me when I got married and I really liked this. And, my mother-in-law has moved on. So, this is actually valuable to me.” or “Oh! This is a picture that my child drew in kindergarten showing how much they love mum, so I never want to lose it because it reminds me of that particular moment.” As researchers, we want the participant to deconstruct that moment, and share as much as they can. By giving them activities, we are able to see that there are scales that come through on emotional attachment, monetary value, etc. The use of an activity gives so much more basis for discussion with participants. Participants are often very engaged in our sessions, which creates a basis for trust and conversation to grow.

That’s why you’re testing the process and that kind of answers that might work. I think that’s the way you get inside.

You got it. So often, we expect participants to share their one nugget with us succinctly in a 5-minute question. People do not communicate that way – we must appreciate how people naturally communicate in order to learn from them. Our research protocols and activities must reflect how people share their thoughts. Human data is often messy, complex and quite abstract. Humans communicate with stories. That “messiness” requires understanding and is often what organizations don’t have the time to focus on. Without it, we lose the human from the product equation, and often, the product suffers overall.

As part of our five-phased process, we encourage the stakeholders to partake of the research, hear the stories first-hand, and socialize them internally. When designers can anchor their offerings in a human story, everyone benefits, and most importantly, the designers themselves feel their output to be more meaningful.

Ok. I remember that in one of your presentations in Budapest, you showed some graphics. Can you please tell us about that?

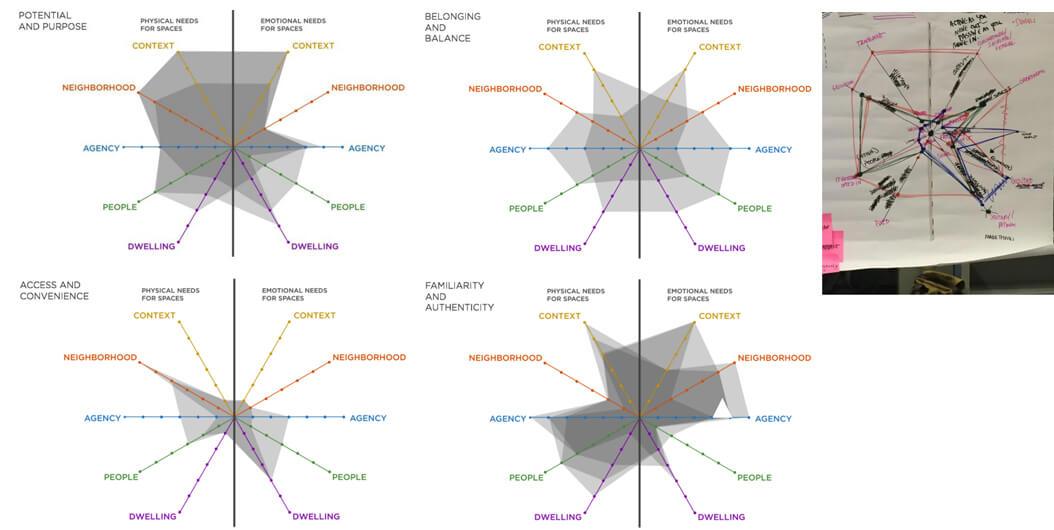

Absolutely – when obtaining human data from people, we also have to have frameworks to organize the abstract data. There are many different basic ways to represent and share human data. It is not always about ROI or measurement. Measurement is a good thing, but if you don’t understand what you are dealing with, measurement is absolutely useless. So, you have to first understand what drives people and, unfortunately, it involves emotions. Emotions are not something that can be easily defined or quantified, so we come up with frameworks. Many successful, reusable frameworks exist, and we tap into them whenever we can. I showed a spider diagram in Budapest when I was discussing how people defined the factors that contributed to choosing their home. A 2×2 is an easy framework to understand, but what happens when you have more than 2 vectors? Possibly 6 or 8? It turns into a spider diagram.

To bring further meaning to it, we divided the diagram into two sides, representing the physical attributes and the emotional attributes of selecting a home. For each participant we met, we were able to hear their stories, and plot their spider diagram. Each diagram takes a shape; if we have many participants with the same shape, we notice patterns in the data. In essence, the diagrams helped create patterns to the stories we heard about important attributes in choosing a home in the City of Boston.

In terms of tools, are there any tools you found while correlating the result of your research?

Most of the tools we use are organically created. When we do research, we devise a note-taking strategy.

For different types of projects, that strategy will be different. Recording observations during ethnographic research is different from recording notes during a moderated usability test. We conduct debriefs with our observers using Post-It Notes to help expedite and remember details for analysis of the data. When conducting remote research, there are plenty of tools we use. Depending on the type of study, automated tools help streamline testing, diary studies, and the recording of interviews in other languages. We have a list that we tend to refer to based on our success rate with them.

Are there any digital tools specifically you find for capturing data?

We have used a variety of tools, such as Mural, and even Pinterest! Additionally, we collect diary studies using dscout and Qualvu and have had great success with them. When we are low-budget, we use Google tools all the time.

And, my last question is, which is your favorite part of research and which part do you find the most challenging?

A tough question! I love doing the research: I have been doing this for 27 years and would not do anything else. The research itself has so many aspects to consider, and is not just the act of asking a question! I am fascinated by the placement and positioning of research in an organization. I love explaining to people what to do, and how to elevate research as a strategic tool in an organization. Given our background of teaching and consulting, we always coach as we consult, so that people can really benefit from more credible practices. So often, clients will see us employ our five-phased process and ask us to come back and teach the process itself. It is so rewarding!

In terms of the various phases and tasks that I most enjoy, I love the alignment phase, as well as the planning and definition that is needed to design the protocol: being able to provide people with a way to share their thoughts is so much fun. It takes its own level of creativity and organization of thought. I love when participants feel a level of engagement with the study because if they are having fun, they tend to be more relaxed. When they are more relaxed, they share more. I love learning about people, and moderating sessions to hear their stories.

Shepherding stakeholders through the process is also something I truly enjoy. I marvel at my colleague Zarla’s abilities to effectively analyze human data and find I have learned so much from her in terms of various frameworks to apply. I find the aspect of assembling the patterns into frameworks most challenging, but also, most rewarding. Helping non-researchers understand how to approach human data can be difficult, but so many opportunities arise that are anchored in the human story that stakeholders can then benefit from.

Very useful information. Thank you so much, Meena and it’s good that you’ll be here in Bengaluru for the UX India Conference. And, we’ll be a part of your workshop. And, I’m sure that it’ll be interesting there.

Thanks, and have a wonderful evening! Have a great weekend.

Footnote:

Abhay Vohra is the Program Manager for Digital Experience at Net Solutions. He met Meena Kothandaraman at a UX Conference in Budapest, Hungary on Oct 17.