Who was IBM’s Black Team? Here’s a quick tale about the evolution of effective software testing.

Back in the 1960s, buggy software meant defective software, and because there was no standard software testing process, IBM was losing a lot of money. This was when IBM realized that a few testers on the team were noticeably more efficient than their peers, by around 10-20%: people who had a passion for finding bugs in the software product. IBM put these like-minded testers on a single team and hoped that they would speed up bug finding by at least 20%.

This proved beneficial for IBM, as software quality improved at a tremendous rate. The new team was twice as effective as its peers and took pride in its new role. The testers enjoyed their work so much that they saw themselves as villainous destroyers (whom the programmers feared) and even started showing up to work dressed in black. Hence the name: IBM’s Black Team.

Since then, software testing has evolved as a process in many ways, and this art of identifying bugs in the software product is now deeply entrenched in the software development process.

The global software testing services market is estimated to grow by USD 34.49 billion during the forecast period 2021-2025, at a CAGR of 12%.

— MarketWatch

Now let’s look at what software testing is and the related concepts in detail.

What is Software Testing?

Goal: Follow the best techniques and leverage the best tools to identify and fix the existing bugs in the software

Software testing is the process of identifying and reporting bugs in the software product under development. It supports quality assurance by validating that the software is functional and works as intended.

The goals of software testing are to ensure that the software:

- Meets the business and functional requirements

- Produces the desired output for all input types

- Responds quickly to user input

- Works optimally in all kinds of environments

- Meets stakeholders’ expectations

- Is ready to be shared with the respective stakeholders

Quality Assurance vs Quality Control vs Software Testing

Confusion between quality assurance, quality control, and software testing is common. Although all three serve the same primary objective, i.e., ensuring that the software meets the defined quality standards, each of them has a different focus.

Here’s the difference between software testing, software quality assurance, and quality control:

1. Quality Assurance:

Focuses on preventing bugs from occurring. The quality assurance team ensures that the quality standards and practices needed to maintain software quality across the development cycle are in place.

2. Quality Control:

Focuses on validating the adherence to the recorded software requirements. The quality control team helps validate that the developed software meets the technical software requirements.

3. Software Testing:

Focuses on identifying and reporting existing bugs. The software testing team is responsible for finding the existing bugs in the software and reporting them back to the developers.

Here’s a comparison table that differentiates QA, QC, and testing:

| Aspect | Quality Assurance | Quality Control | Software Testing |
|---|---|---|---|
| Scope | Is part of STLC (Software Test Life Cycle) | Is categorized as a branch of quality assurance | Is categorized as a branch of quality control |
| Focus | Prevention of bugs | Validating whether the developed software meets the recorded functional and business requirements | Identification and reporting of existing bugs in the software |
| Orientation | Process-oriented | Product-oriented | Product-oriented |
| Nature of activities | Preventive | Corrective | Preventive |
| Who is involved | The software development team and the stakeholders | The software development team | Testers and developers |

What are the Attributes of Quality Software?

Software quality attributes help define what quality means in software. They show the development team which aspects to focus on when delivering quality.

The most noteworthy attributes of good software include:

- Efficiency

Ensures that only the necessary amount of computing resources and code is used to perform the intended action

- Reliability

Ensures that the software performs the intended action without bugs and delays

- Usability

Ensures that minimal effort is required to perform the intended action, i.e., the interface is easy to use and operate

- Survivability

Ensures that the critical software functions can be performed efficiently even when a part of the system is facing an issue

- Integrity

Ensures that the access to the software and data is controlled, i.e., only the authorized personnel can gain access to the software

- Correctness

Ensures that all the software requirements are met and that the software fulfills the clients’ needs

- Flexibility

Ensures that minimal effort is required to modify the software and fix errors in the long run

- Maintainability

Ensures that minimal effort is required to identify and fix the errors after the software launch

- Portability

Ensures that minimal effort is required to migrate and use the software in another environment

- Expandability

Ensures that minimal effort is required to add/remove functionalities or to enhance the existing ones

- Interoperability

Ensures easy and seamless integration with other existing software without affecting its functionality

Manual Testing vs Automated Testing

The software testing process as a whole combines manual and automated tests, which is generally considered the best approach. It is up to the quality assurance and testing team to decide which type of testing to use at each stage of the development process.

In manual software testing, the testers perform the test cases without any assistance from available tools and software.

In automated testing, the test cases are executed automatically by testing tools and scripts, without human involvement.

For a more detailed comparison, refer to the table below:

| Aspect | Manual Testing | Automated Testing |
|---|---|---|
| Performed by | The quality assurance and testing team | Software testing tools |
| Best suited for | Exploratory, usability, and ad hoc testing | White-box testing, load testing, and performance testing |
| When to use | When experienced and skilled quality assurance and testing experts are available | For any test that needs to be repeated frequently during the development process |
| Reliability | Less reliable, as it is prone to human error | Fast and reliable, as it runs using tools and pre-written test scripts |
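To make the contrast concrete, here is a minimal sketch of an automated test using Python’s built-in `unittest` module. The `apply_discount` function and its rules are hypothetical, invented for illustration; the point is that once the checks are scripted, they can be re-run on every build without anyone executing them by hand.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: apply a percentage discount to a price."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 10), 180.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(50.0, 150)


if __name__ == "__main__":
    # Load and run every test method, reporting pass/fail with no manual steps.
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTests)
    unittest.TextTestRunner(verbosity=2).run(suite)
```

In practice, a script like this would typically be triggered by a CI server on every commit, which is where the repeatability of automated tests pays off.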

Principles of Software Testing

The seven principles of software testing are:

- Testing aims to identify the bugs in the incremental version of the software

- Testers should avoid exhaustive testing (where all the use case scenarios are used for testing)

- Early testing should be given high preference

- Focus on identifying defect clusters, i.e., the modules that contain the most defects

- Take the “pesticide paradox” into consideration, i.e., do not rely on running the same tests repeatedly, as over time they stop finding new bugs

- Testing should be “context dependent,” i.e., create different software testing strategies for different software. Every software product is unique, so the testing team cannot expect to run the same test cases on every software product under development

- Software testing is not only about identifying bugs but also ensuring that the software fulfills its business and the functional requirements
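Defect clustering is easy to check once bug reports are tagged by module. The sketch below assumes a hypothetical export from a bug tracker, reduced to a plain list of module names, and returns the fewest modules that together account for a chosen share of all defects; the 80% default is an illustrative assumption, not a rule.

```python
from collections import Counter


def defect_clusters(bug_modules: list[str], share: float = 0.8) -> list[str]:
    """Return the fewest modules that together account for `share` of all reported bugs."""
    counts = Counter(bug_modules)
    total = sum(counts.values())
    clusters, covered = [], 0
    # Walk modules from most to least defective until the target share is reached.
    for module, count in counts.most_common():
        if covered >= share * total:
            break
        clusters.append(module)
        covered += count
    return clusters


# Hypothetical bug reports, one module name per reported bug.
bugs = ["checkout"] * 12 + ["payments"] * 6 + ["search"] * 2 + ["profile", "help"]
print(defect_clusters(bugs))  # ['checkout', 'payments']
```

Modules returned by such a check are natural candidates for extra test effort, while the pesticide paradox is a reminder to keep varying the tests aimed at them.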

What are the Different Software Testing Approaches?

The main approaches to software testing include:

- Static Testing

Static testing is performed without executing the code: the software testing team inspects and reviews the code instead. Static testing focuses on verification.

- Dynamic Testing

Dynamic testing runs the code against the designed test cases. It focuses on verification and validation.

- Passive Testing

Passive testing helps verify system behavior without actively interacting with the software product. The testers go through system logs and trace patterns to infer how the system behaves.

- Exploratory Testing

Exploratory testing is also known as “on the precise moment testing,” as no use cases are defined in advance. The testing team decides on the testing technique based on what they are testing. This technique is based on the testers’ knowledge, experience, and creativity.

- Black-Box Testing

Black-box testing helps in testing the functionality of the software product without any knowledge of its source code.

- White-Box Testing

In this testing approach, the software testing team checks the internal structure and logic of the code instead of only checking how the software works from the outside.

- Gray-Box Testing

Gray-box testing is a hybrid of black-box and white-box testing. The software testing team uses partial knowledge of the internal data structures and code to run tests at the black-box level more effectively.
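To illustrate the difference between black-box and white-box testing, the sketch below tests a hypothetical `shipping_cost` function whose pricing rule is invented for the example. The black-box checks are derived only from the stated rule, while the white-box checks are picked by reading the code so that every branch runs at least once.

```python
def shipping_cost(weight_kg: float, express: bool) -> float:
    """Hypothetical rule: 5.0 up to 1 kg, plus 2.0 per extra kg; express doubles the cost."""
    if weight_kg <= 0:
        raise ValueError("weight must be positive")
    if weight_kg <= 1:
        cost = 5.0
    else:
        cost = 5.0 + 2.0 * (weight_kg - 1)
    if express:
        cost *= 2
    return cost


def black_box_tests() -> None:
    # Derived from the stated rule alone, without looking at the implementation.
    assert shipping_cost(1, express=False) == 5.0
    assert shipping_cost(3, express=False) == 9.0
    assert shipping_cost(3, express=True) == 18.0


def white_box_tests() -> None:
    # Chosen by reading the code so that every branch executes at least once.
    try:
        shipping_cost(0, express=False)  # invalid-weight branch
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for zero weight")
    assert shipping_cost(0.5, express=False) == 5.0  # weight <= 1 branch
    assert shipping_cost(2, express=True) == 14.0    # weight > 1 and express branches


black_box_tests()
white_box_tests()
print("all checks passed")
```

A gray-box tester would sit in between: knowing, for example, that 1 kg is a boundary in the code, they would still test only through the public function.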

What are the Different Software Testing Levels?

Primarily there are three levels of software testing, which include:

- Unit Testing

Unit testing helps identify bugs in a single module (unit) of the code. In Agile development, unit testing typically covers the code written for a single user story during a time-boxed sprint cycle.

- Integration Testing

Integration testing helps test the integrated version of the software, i.e., when two or more modules are combined. It helps verify that the modules work together correctly and identify bugs (if any) in their interactions.

- System Testing

System testing tests the complete software system once all the modules are integrated. It checks the entire software for bugs and verifies whether it meets the business and the functional requirements.
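The unit and integration levels can be sketched with two hypothetical modules, a cart and a tax calculator (both invented for illustration). The unit tests check each module in isolation, using a stub in place of the real tax calculator, while the integration test checks that the real modules produce the right result together. System testing would exercise the complete application end to end, which is beyond a short snippet.

```python
import unittest


class TaxCalculator:
    """Hypothetical module 1: applies a flat tax rate to an amount."""

    def __init__(self, rate: float) -> None:
        self.rate = rate

    def add_tax(self, amount: float) -> float:
        return round(amount * (1 + self.rate), 2)


class Cart:
    """Hypothetical module 2: sums item prices and relies on a TaxCalculator for the total."""

    def __init__(self, tax: TaxCalculator) -> None:
        self.tax = tax
        self.prices: list[float] = []

    def add(self, price: float) -> None:
        self.prices.append(price)

    def total(self) -> float:
        return self.tax.add_tax(sum(self.prices))


class StubTax(TaxCalculator):
    """Test double that removes the real tax logic so Cart can be tested alone."""

    def __init__(self) -> None:
        super().__init__(rate=0.0)

    def add_tax(self, amount: float) -> float:
        return amount


class UnitTests(unittest.TestCase):
    def test_tax_calculator_alone(self):
        self.assertEqual(TaxCalculator(0.1).add_tax(100.0), 110.0)

    def test_cart_alone_with_stub(self):
        cart = Cart(StubTax())
        cart.add(20.0)
        cart.add(30.0)
        self.assertEqual(cart.total(), 50.0)


class IntegrationTests(unittest.TestCase):
    def test_cart_with_real_tax_calculator(self):
        cart = Cart(TaxCalculator(0.1))
        cart.add(20.0)
        cart.add(30.0)
        self.assertEqual(cart.total(), 55.0)


if __name__ == "__main__":
    loader = unittest.defaultTestLoader
    suite = unittest.TestSuite()
    suite.addTests(loader.loadTestsFromTestCase(UnitTests))
    suite.addTests(loader.loadTestsFromTestCase(IntegrationTests))
    unittest.TextTestRunner(verbosity=2).run(suite)
```

The stub is what keeps the cart’s unit test independent: if the tax logic breaks, only the tax unit test and the integration test fail, which helps pinpoint the faulty module.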

What are the Different Types of Software Testing?

There are several types of software testing to choose from, and each serves a specific use case. Let’s have a look at some of them:

- Ad-hoc Testing

It is an unstructured software testing technique that is performed randomly, without any predefined test cases. This type of testing helps identify bugs that went undetected by the other, standard tests.

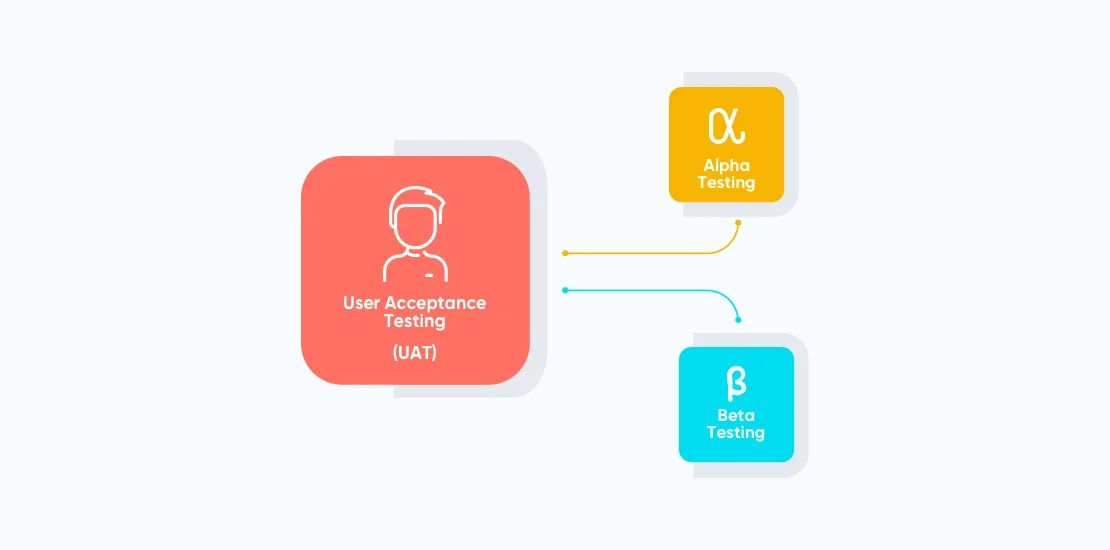

- Acceptance Testing

It is a formal software testing technique that helps validate whether the software meets the user’s needs and business and functional requirements. It is often conducted by the end users and is also known as “User Acceptance Testing.”

- Regression Testing

Regression testing comes into the picture when a major change is made to part of the software. The existing test cases are re-run to check whether the software still performs as expected after the change.

- Security Testing

It is a type of testing that helps ensure that the software is protected against outside attacks from unauthorized persons.

- Smoke Testing

It is an initial round of software testing that helps identify basic problems that may prevent the software from running.

- Monkey Testing

As the name suggests, monkey testing is conducted as if a monkey were operating the software: random values are fed as input to check how the software behaves.

- Pair Testing

This is a collaborative software testing technique where two software development team members (two testers, a tester and a developer, or a tester and a business analyst) work on the same system to test the software. One team member runs the tests while the other analyzes the results.

- Load Testing

Load testing helps determine how efficiently the software performs under heavy load, i.e., when the number of users increases or when it is handling a large volume of data.

- Continuous Testing

Continuous testing is about running automated tests as part of the software development process. It helps identify the bugs early on in the process, thus lowering the risk of detecting many bugs at once.

- Compatibility Testing

This type of testing helps validate that the software runs fine when operated in different environments.

- Alpha/Beta Testing

Alpha testing is conducted by a group of end users or an internal testing team at the developer’s site. It is an internal acceptance testing technique that proves helpful for most off-the-shelf software.

Alpha testing is followed by beta testing, an external user acceptance testing technique where a limited group of end users tests the software and reports faults and bugs.
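Monkey testing, described above, can be approximated in code by throwing random input at a function and flagging any crash that is not an expected rejection. The sketch below assumes a hypothetical `parse_age` function under test that is allowed to raise `ValueError` for bad input; the fixed seed keeps runs reproducible so that any failure can be replayed.

```python
import random
import string


def parse_age(text: str) -> int:
    """Hypothetical function under test: parse a human age from user input."""
    value = int(text.strip())
    if not 0 <= value <= 150:
        raise ValueError(f"age out of range: {value}")
    return value


def monkey_test(func, runs: int = 1000, seed: int = 42) -> list[tuple[str, Exception]]:
    """Throw random strings at `func` and collect any unexpected exceptions."""
    rng = random.Random(seed)  # seeded so that failures can be reproduced
    failures = []
    for _ in range(runs):
        length = rng.randint(0, 8)
        text = "".join(rng.choice(string.printable) for _ in range(length))
        try:
            func(text)
        except ValueError:
            pass  # documented, expected rejection of bad input
        except Exception as exc:  # anything else is a bug worth reporting
            failures.append((text, exc))
    return failures


print(f"unexpected failures: {len(monkey_test(parse_age))}")
```

Each recorded failure keeps the exact input that caused it, which turns a random discovery into a reproducible bug report.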

Software Testing Benefits

The benefits of having a software quality assurance and testing process in place include:

- Minimizes the risk of errors

- Enhances the value proposition of the final product

- Helps promote continuous improvement of the software development process

- Lowers the chances of errors in the later stages of the development process

- Helps meet the client requirements, which, in turn, helps enhance the development team’s credibility

- Makes it easier to iterate on the software when performing maintenance activities

What are the Different Types of Software Testing Tools?

| Tool Type | What Does It Do? |
|---|---|
| Program Monitors | Monitor the execution of the program code, fully or partially, to help locate errors |
| Code Coverage | Shows the extent to which the source code is exercised while running a test. Generally, the higher the test coverage, the lower the chances of undetected bugs |
| Symbolic Debugging | Helps inspect and analyze code variables at the point of error |
| Automated Functional GUI Testing | Helps run system-level tests frequently through the GUI (Graphical User Interface) |
| Benchmarks | Help run run-time performance comparisons |
| Performance Analysis | Helps highlight hot spots (parts of the source code where most of the instructions are executed) and resource usage |
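Code coverage tools work by recording which lines run while the tests execute. The sketch below is a toy line-coverage counter built on Python’s `sys.settrace` and `dis.findlinestarts`, for illustration only; real projects would normally use a dedicated tool such as coverage.py. The `classify` function is hypothetical: testing it with a positive number alone leaves the `negative` branch unexecuted, so coverage stays below 100%.

```python
import dis
import sys


def classify(n: int) -> str:
    if n < 0:
        return "negative"
    return "non-negative"


def line_coverage(func, inputs) -> float:
    """Return the fraction of func's body lines executed while calling func on each input."""
    code = func.__code__
    # Source lines that start bytecode in the function body; the `def` line is excluded.
    body_lines = {
        line
        for _, line in dis.findlinestarts(code)
        if line is not None and line != code.co_firstlineno
    }
    executed = set()

    def local_tracer(frame, event, arg):
        if event == "line":
            executed.add(frame.f_lineno)
        return local_tracer

    def global_tracer(frame, event, arg):
        # Trace only frames that run the function under test.
        return local_tracer if frame.f_code is code else None

    sys.settrace(global_tracer)
    try:
        for value in inputs:
            func(value)
    finally:
        sys.settrace(None)  # always switch tracing off again
    return len(executed & body_lines) / len(body_lines)


print(f"{line_coverage(classify, [5]):.0%}")      # only the non-negative path runs
print(f"{line_coverage(classify, [5, -1]):.0%}")  # both branches run
```

Full line coverage here only means every line ran at least once; it says nothing about whether the assertions checking those lines were meaningful.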

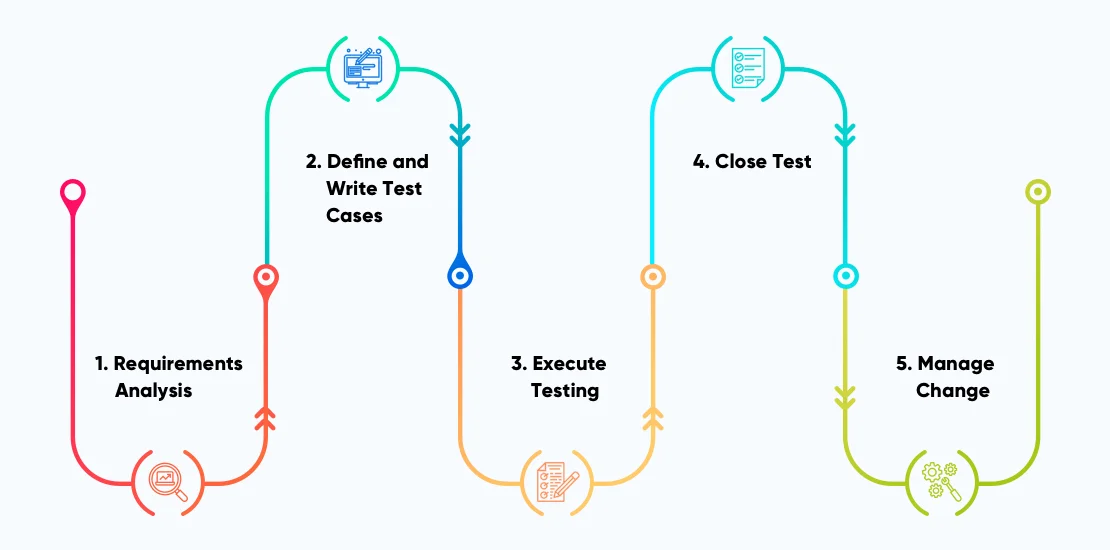

Software Testing Process (from Agile Perspective)

Here’s a five-step process for conducting software testing:

- Requirements Analysis

This is the same requirements analysis process that is conducted at the start of the software development process. The testing team also takes an active part in this requirements gathering and analysis, as it helps them create test cases for the features that hold higher priority in the product backlog.

Requirements analysis helps testers in the following ways:

- Understanding the product and its features

- Analyzing the functional and non-functional requirements

- Deciding on the software testing approach to follow

- Deciding whether manual or automated testing is needed

- Listing the tools that will be helpful during the process

- Understanding the risks, dependencies, and challenges

- Building a test schedule

- Creating approval workflows early on

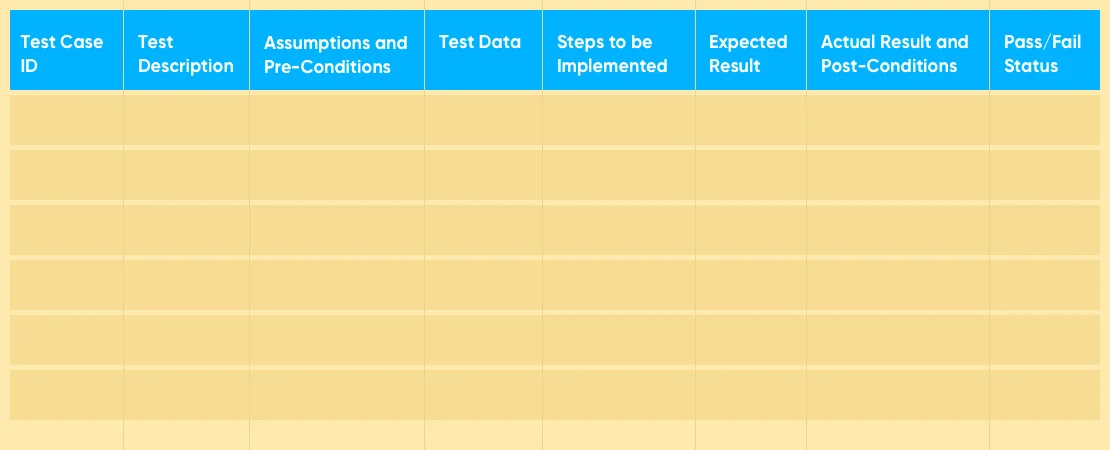

- Define and Write Test Cases

A test case is a document that specifies the system inputs, test conditions, test processes, expected outputs, and the steps that must be completed for the test to be considered complete.

Different test cases are written to check and validate different aspects of the software. For instance, there are separate test cases for functionality, usability, and security.

When writing test cases, the following parameters are considered:

- Test Case ID: Represents a unique test ID. When naming a test case, consider following a standard naming convention for convenience.

- Test Description: This includes a description of the feature that needs to be tested

- Assumptions and Pre-Conditions: Describes the conditions that need to be met before the test case is executed

- Test Data: Includes the permissible variables and values for executing a test case. For example, what are the allowable variables and values when creating a username and password for a customer account?

- Steps to be Implemented: This includes the easy-to-execute steps that a customer follows to perform an intended action.

- Expected Result: Includes the expected test result once the test case has been successfully executed.

- Actual Result and Post-Conditions: The actual result describes what happened when the test was run, so that it can be compared with the expected result. The post-conditions describe the state the system should be in after the test case has been executed.

- Pass/Fail Status: If the expected and the actual result are the same, the test passes. If they differ, the test fails.
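The parameters above map naturally onto a simple record. The sketch below models a test case as a Python dataclass whose status is derived by comparing the expected and actual results; the field names, the sample login case, and the extra “Not Run” state for cases that have not been executed yet are illustrative assumptions, not a standard template.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TestCaseRecord:
    case_id: str                # e.g. following a team naming convention
    description: str
    preconditions: list[str]
    test_data: dict[str, str]
    steps: list[str]
    expected_result: str
    actual_result: Optional[str] = None   # filled in after execution
    postconditions: list[str] = field(default_factory=list)

    @property
    def status(self) -> str:
        """Pass if actual matches expected, Fail if it differs, Not Run if not executed yet."""
        if self.actual_result is None:
            return "Not Run"
        return "Pass" if self.actual_result == self.expected_result else "Fail"


login = TestCaseRecord(
    case_id="TC_LOGIN_001",
    description="User can log in with a valid username and password",
    preconditions=["User account exists", "Login page is reachable"],
    test_data={"username": "demo_user", "password": "Valid#Pass1"},
    steps=["Open the login page", "Enter username and password", "Click 'Log in'"],
    expected_result="Dashboard is displayed",
)
print(login.status)  # Not Run
login.actual_result = "Dashboard is displayed"
print(login.status)  # Pass
```

Deriving the status from the two result fields, rather than storing it separately, keeps the pass/fail verdict from drifting out of sync with the recorded results.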

- Execute Testing

Tests are executed in Agile sprints. The developers build a feature over a sprint cycle and then pass it on to the Agile testing team. The developed features are checked using various software testing techniques, and a test report is created and passed back to the developers.

This to-and-fro movement continues until all the features are bug-free.

- Close Test

To close a test, it should meet the exit criteria, i.e., the conditions which, when met, mean that the feature is ready to go to the deployment stage.

Some of the primary conditions of the exit criteria include:

- All the business and the functional requirements of the software are met

- At least 90% of test cases should result in “pass”

- All the system critical bugs are fixed, and there are no hindrances in the smooth running of the system

- All the input possibilities are checked and produce the desired output
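Some of these exit criteria can be checked automatically before a feature is signed off. The sketch below checks two of them, the 90% pass rate and the absence of open critical bugs, against a hypothetical `TestRunSummary`; how those numbers are collected from a test runner or bug tracker is left as an assumption, and the requirements-related criteria still need a human review.

```python
from dataclasses import dataclass


@dataclass
class TestRunSummary:
    """Hypothetical summary of one test run, e.g. exported from a test runner."""
    passed: int
    failed: int
    open_critical_bugs: int


def meets_exit_criteria(summary: TestRunSummary, min_pass_rate: float = 0.90) -> bool:
    """Return True only if the pass rate and critical-bug conditions are both satisfied."""
    executed = summary.passed + summary.failed
    if executed == 0:
        return False  # nothing was run, so nothing can be signed off
    pass_rate = summary.passed / executed
    return pass_rate >= min_pass_rate and summary.open_critical_bugs == 0


print(meets_exit_criteria(TestRunSummary(passed=188, failed=12, open_critical_bugs=0)))  # True
print(meets_exit_criteria(TestRunSummary(passed=188, failed=12, open_critical_bugs=1)))  # False
print(meets_exit_criteria(TestRunSummary(passed=170, failed=30, open_critical_bugs=0)))  # False
```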

- Manage Change

When the software is live in the market, continuous improvement should be a priority. The testing team should continue with the continuous testing process and should also be able to produce a detailed report of the bugs reported by the customers.

The other areas where the software testing team needs to be proactive include:

- When a new feature is developed, the testing team should conduct sanity testing to check that the code changes have not broken the software’s existing functionality

- When code refactoring is implemented, i.e., the internal structure of the code is changed in a way that does not affect its external behavior.

Software Testing: Best Practices

Some of the best practices for conducting quality software testing include:

- Write lots of small, fast unit tests first, then move on to writing a few more detailed, high-level tests.

- Practice test-driven development (TDD). It implies that the software requirements are converted into test cases early in the development cycle. TDD helps in tracking the software development efforts continuously against the developed test cases.

- Avoid tying unit tests too closely to the implementation details of the code. Otherwise, the unit tests tend to fail whenever the code is refactored, even when its behavior has not changed

- Avoid writing unit tests for trivial code, such as simple getters and setters. Skipping them does little harm

- Run automated contract tests near the end of the development cycle. This helps validate that the software implementation sticks to a well-defined contract (from the consumer and the provider perspective)

- Encourage the development team to follow a collaborative approach to development and testing. Cross-functional testing should be deeply entrenched in the development team’s work culture

- Use the following format when writing acceptance tests: Given [condition] and [condition 2], when [user action] and [user action 2], then [expected result]

- Consider running exploratory tests, as they help detect issues that might have slipped through the build pipeline. These tests are based on the tester’s creativity and freedom and do not follow a standard process

- Write only as many test cases as needed, and ensure that there is no test duplication

- Write clean and understandable test code
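The Given/When/Then format can be followed in plain test code, even without a dedicated BDD framework. The sketch below uses a hypothetical in-memory `ShoppingCart` with a made-up “SAVE10” coupon rule; each step of the test is commented with the clause it implements.

```python
import unittest
from typing import Optional


class ShoppingCart:
    """Hypothetical system under test with one made-up coupon rule."""

    def __init__(self) -> None:
        self.items: dict[str, float] = {}
        self.coupon: Optional[str] = None

    def add_item(self, name: str, price: float) -> None:
        self.items[name] = price

    def apply_coupon(self, code: str) -> None:
        self.coupon = code

    def total(self) -> float:
        subtotal = sum(self.items.values())
        # Assumed rule: coupon SAVE10 takes 10% off orders of 50.0 or more.
        if self.coupon == "SAVE10" and subtotal >= 50.0:
            subtotal *= 0.9
        return round(subtotal, 2)


class CouponAcceptanceTest(unittest.TestCase):
    def test_coupon_discount_applied_to_large_order(self):
        # Given a cart with items worth 60.0
        cart = ShoppingCart()
        cart.add_item("keyboard", 40.0)
        cart.add_item("mouse", 20.0)
        # and a valid coupon code
        coupon = "SAVE10"
        # When the user applies the coupon
        cart.apply_coupon(coupon)
        # and views the order total
        total = cart.total()
        # Then the total reflects a 10% discount
        self.assertEqual(total, 54.0)


if __name__ == "__main__":
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(CouponAcceptanceTest)
    unittest.TextTestRunner(verbosity=2).run(suite)
```

Keeping the clauses as comments makes the test readable by non-developers reviewing acceptance criteria, which is much of the format’s value.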

FAQs Related to Software Testing

1. What is Code Coverage?

Code coverage or test coverage measures the extent to which the source code is covered when test cases are run.

2. What is Shift Left Testing?

Shift-left testing is the practice of starting software testing early. It shifts testing tasks to the left, i.e., earlier in the software development and delivery lifecycle.

3. What are the Latest Software Testing Trends?

The latest software testing trends include:

- Test Automation

- DevOps and Agile Testing

- AI (Artificial intelligence) for testing

- Regression testing

4. What is Crowdsourced Testing?

Crowdsourced testing is an emerging software testing trend where a dispersed, temporary workforce is deployed to test a software application. It is similar to staff augmentation, where staff is outsourced from across organizations on a project-to-project basis.

5. What is the Difference Between a Test Script and a Test Case?

A test case is a documented testing process that is followed to test a feature or software functionality.

On the other hand, a test script is a set of instructions or a code segment that helps test a feature or software functionality.

6. When Should Automated Software Testing Be Conducted?

Automated testing is best suited to scenarios where tests need to be run repeatedly.

7. What is Fault Seeding in Software Testing?

Fault seeding is a technique used to validate the effectiveness and efficiency of a testing process. In this technique, one or more faults are intentionally added to the code base without letting the testers know. If the tester can identify the seeded faults, one can be assured that the software testing is being effectively conducted.
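Seeded faults can also be used for a rough quantitative estimate. A common model, usually attributed to Harlan Mills, assumes that seeded and real faults are equally likely to be found: if testers find s of S seeded faults along with n real faults, the total number of real faults is estimated as n × S / s. The sketch below applies that formula to hypothetical counts; since the equal-detectability assumption is only an approximation, the result is an indication, not a guarantee.

```python
def estimate_real_faults(seeded_total: int, seeded_found: int, real_found: int) -> float:
    """Estimate total real faults, assuming seeded and real faults are equally detectable."""
    if seeded_found == 0:
        raise ValueError("no seeded faults found; the estimate is undefined")
    detection_rate = seeded_found / seeded_total  # share of seeded faults the testers caught
    return real_found / detection_rate            # equivalent to n * S / s


# Hypothetical counts: 20 faults seeded, 15 of them found, plus 30 real faults found.
total = estimate_real_faults(seeded_total=20, seeded_found=15, real_found=30)
print(f"estimated real faults: {total:.0f}, still undetected: {total - 30:.0f}")
```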

8. What is Multi-tenancy Testing?

Multi-tenancy testing is a software testing approach to identify and fix issues in multi-tenant applications. In this approach, the app is tested for several aspects such as functionality, infrastructure, and network. The idea is to ensure that no issue, however small, goes unnoticed and that the app works smoothly.

Conclusion

If developers are the creators, testers ensure that the creation is bug-free. Software testing is a building block of software development and covers many activities that are essential for quality delivery. An organized software testing process instills confidence in the software and helps enhance the overall user experience.

In this write-up, we tried to cover everything around software testing, including what software testing is, its approaches, levels, types, benefits, process, principles, best practices, and FAQs.