Executive Summary

Most software systems don’t fail loudly; they fail in ways your dashboards weren’t built to catch. Fragmented data, disconnected signals, and hours lost to manual triage are the real cost of weak observability. Observability fixes this by letting teams understand system behavior through external signals – logs, metrics, and traces – without needing to know the failure mode in advance.

The result: faster recovery, less downtime, and reliability that can be measured and managed like any other business metric.

This guide covers how to get there, from first principles to a practical Crawl, Walk, Run adoption path using OpenTelemetry.

Key Takeaways

- You will see why observability is a business issue: outages, slow recovery, and repeated incidents all affect revenue, trust, and delivery speed.

- You will get a plain-English definition of observability and a clear difference between observability and monitoring.

- You will understand how logs, metrics, and traces work together, and why correlation across them matters.

- You will learn how SLI, SLO, and SLA fit together, and how error budgets support better reliability decisions.

- You will get a practical Crawl, Walk, Run path for improving observability without trying to do everything at once.

Reliability is no longer purely an engineering concern. When a service degrades, the consequences ripple through revenue, customer trust, contract obligations, and engineering capacity simultaneously. The question facing technology leaders today is not whether to invest in observability, but how to move from reactive fire-fighting to a deliberate, measurable operating discipline.

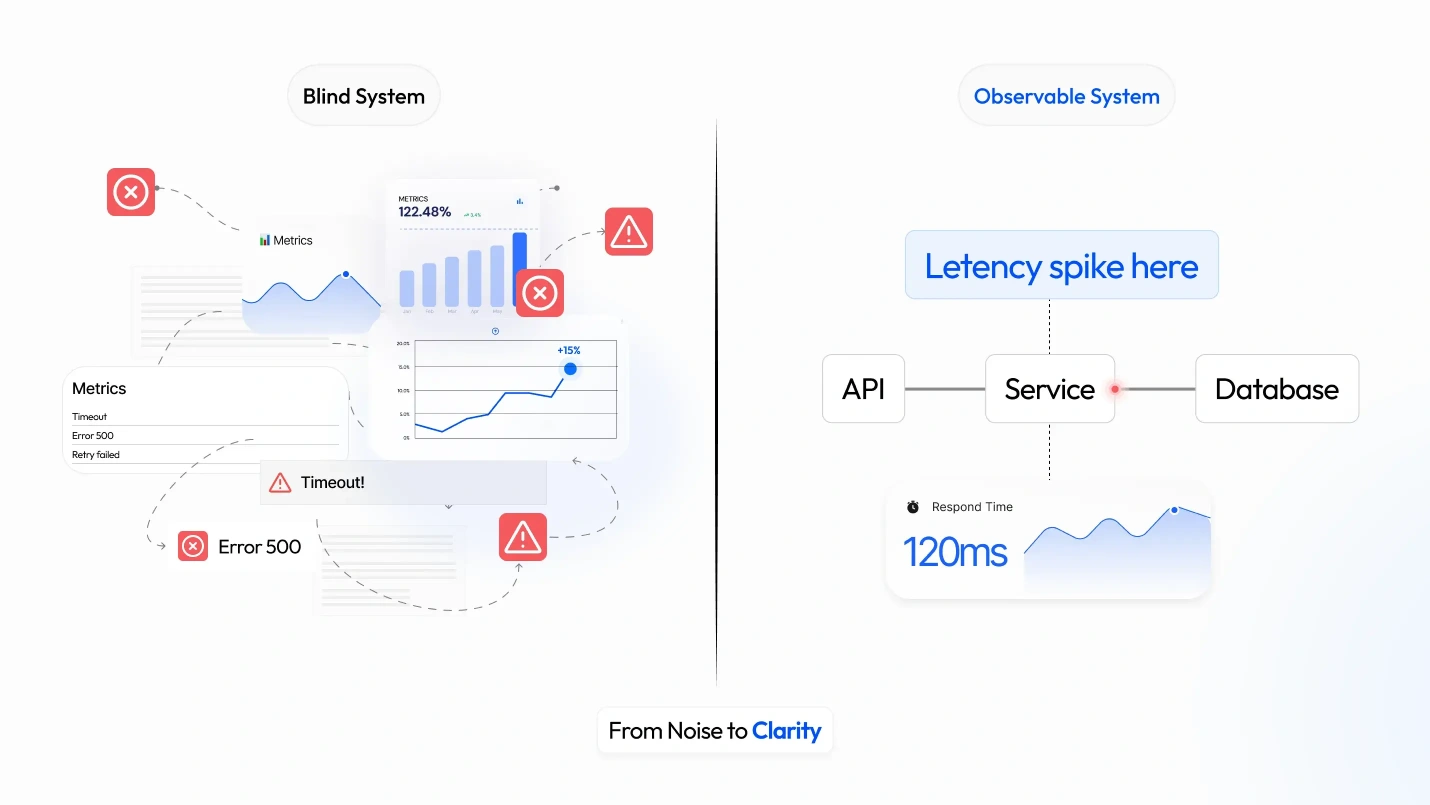

Most organizations already have monitoring in place. Many have dashboards, alerting pipelines, and log aggregation. Yet incident resolution times remain long, post-mortems repeat the same root causes, and engineering teams carry a heavy load of toil that crowds out delivery work. The gap is not a lack of data. It is a lack of connected, interpretable data at the moment it matters.

Why did a team with dashboards, logs, and alerts still spend hours finding the root cause?

A real incident often looks like this: the service is slow, dashboards show a spike, logs are noisy, alerts keep firing, and no one can say exactly where the failure started. The team has data, but not enough connected data, so they spend time searching instead of fixing. That costs engineering hours, delays recovery, raises support load, and can damage customer trust. Observability matters because it reduces the gap between “something is wrong” and “we know what to do next.”

Why is visibility into your systems a business problem, not just an engineering one?

It is a business problem because unreliable systems affect revenue, customer satisfaction, compliance risk, and delivery speed. It is also an engineering problem because the same poor visibility leads to alert fatigue, manual triage, repeated checks, and longer recovery times.

From a business perspective, downtime can disrupt sales, customer activity, and internal operations. It can also make it hard to prove whether a service is reliable enough for a customer or a regulator to rely on. DORA’s research treats software delivery performance as a measurable business capability, not just an engineering preference.

From the engineering point of view, missing context creates repeated toil. Teams spend time checking the same services, correlating across separate tools by hand, and waking up people who still do not have enough information to quickly solve the issue. Google’s SRE (Site Reliability Engineering) materials describe toil as manual, repetitive, and tactical work, and warn that organizations should reward root-cause fixes over workarounds that only hide the problem.

The shared cost is simple. When engineering cannot quickly explain and resolve incidents, customers lose confidence, leaders lose predictability, and teams lose morale. The business pays for the recovery time, the loss of focus, and lower trust in the service.

What is observability, and how is it different from monitoring?

Observability is the ability to understand a system from the outside by asking questions about it without already knowing the answer. Monitoring is narrower. It tells you about known conditions you are already watching, while observability helps you investigate new or unexpected failures.

In plain terms, observability means the system emits useful outputs, and those outputs let you infer what is happening inside the system. Those outputs are usually logs, metrics, and traces. Logs are records of events, metrics are measurements over time, and traces show the path of a request through the system.

The three pillars matter most when they are correlated. A metric may show increased latency, a log may show a timeout, and a trace may show that the slowdown started in one dependency. In many incidents, no single signal is enough on its own. Together, they give a more complete view.
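As a rough illustration of correlation, the sketch below starts from a slow metric sample, follows its trace ID to the matching error logs, and then picks the slowest child span in the same trace. The record shapes, field names, and service names are assumptions made for this example, not an OpenTelemetry data model.

```python
# Hypothetical, simplified signal records; field names are illustrative only.
METRIC_SAMPLES = [
    {"name": "request_latency_ms", "value": 2300, "trace_id": "a1"},
    {"name": "request_latency_ms", "value": 40, "trace_id": "b2"},
]
LOGS = [
    {"trace_id": "a1", "level": "ERROR", "message": "timeout calling payments"},
    {"trace_id": "b2", "level": "INFO", "message": "request completed"},
]
SPANS = [
    {"trace_id": "a1", "name": "GET /checkout", "parent": None, "duration_ms": 2300},
    {"trace_id": "a1", "name": "POST /payments/charge", "parent": "GET /checkout", "duration_ms": 2250},
    {"trace_id": "a1", "name": "SELECT cart", "parent": "GET /checkout", "duration_ms": 12},
    {"trace_id": "b2", "name": "GET /checkout", "parent": None, "duration_ms": 40},
]


def investigate(threshold_ms):
    """Join metrics, logs, and spans on trace_id for every slow request."""
    findings = []
    for sample in METRIC_SAMPLES:
        if sample["value"] <= threshold_ms:
            continue  # the metric says this request was fine
        trace_id = sample["trace_id"]
        # Logs explain what went wrong inside the slow request.
        errors = [
            log["message"]
            for log in LOGS
            if log["trace_id"] == trace_id and log["level"] == "ERROR"
        ]
        # Child spans show where the time was spent.
        children = [
            span for span in SPANS
            if span["trace_id"] == trace_id and span["parent"] is not None
        ]
        slowest = max(children, key=lambda span: span["duration_ms"], default=None)
        findings.append({
            "trace_id": trace_id,
            "latency_ms": sample["value"],
            "errors": errors,
            "slowest_dependency": slowest["name"] if slowest else None,
        })
    return findings


if __name__ == "__main__":
    for finding in investigate(threshold_ms=1000):
        print(finding)
```

The join only works because every record carries the same `trace_id`. Without that shared key, the three lists are just three separate searches.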

What this means for the business is direct: better correlation shortens incident time, reduces customer impact, and lowers the cost of repeated manual diagnosis.

How does observability resolve the three core operational pain points?

Observability solves the pain of incomplete incident data by making signal collection and correlation part of the system design, not an afterthought. When logs, metrics, and traces share context, engineers can move from “something broke” to “this dependency failed at this step for this user path.”

It solves alert fatigue by making alerts more meaningful. SRE guidance says alerting should focus on significant events, especially those that consume a large part of the error budget, rather than every unusual but low-value signal. That reduces noise and makes pages more actionable.
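One common way to express "consumes a large part of the error budget" is a burn rate: the observed error ratio divided by the error ratio the SLO allows. The sketch below is a minimal version of that idea. The function names are hypothetical, and the default paging threshold of 14.4 is an assumption (a figure often quoted for a one-hour window against a 30-day budget) that each team should tune.

```python
def burn_rate(error_ratio, slo_target):
    """How many times faster than 'exactly on budget' errors are occurring."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be between 0 and 1, exclusive")
    allowed_error_ratio = 1.0 - slo_target
    return error_ratio / allowed_error_ratio


def should_page(error_ratio, slo_target, threshold=14.4):
    """Page only when the budget is burning fast enough to matter.

    The threshold is an assumed value for illustration, not a fixed rule.
    """
    return burn_rate(error_ratio, slo_target) >= threshold


if __name__ == "__main__":
    # 0.2% errors against a 99.9% SLO burns budget at about 2x: worth a ticket.
    print(burn_rate(0.002, 0.999), should_page(0.002, 0.999))
    # 2% errors burns budget at about 20x: worth waking someone up.
    print(burn_rate(0.02, 0.999), should_page(0.02, 0.999))
```

Alerting on burn rate rather than on raw error counts means a brief blip that barely touches the budget stays quiet, while a sustained failure pages quickly.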

It solves the shared-language problem between business and engineering by tying technical signals to service outcomes. A latency spike is not just a chart; it may mean a failed checkout, an unhappy customer, or a breached commitment. SLO-based thinking turns that into a common conversation about impact and priority.

What this means for the business is that observability makes incident response faster and more predictable. That lowers downtime cost, improves customer experience, and makes reliability easier to manage as a business outcome.

What are leading and lagging indicators, and why does the distinction matter?

Lagging indicators tell you the problem after it has already affected the service. Examples include spikes in error rates, increases in p99 latency, and failed deployments. Leading indicators warn you earlier, before customers fully feel the issue. Examples include queue depth growth, rising memory pressure, and a dependency that is slowly degrading.

The distinction matters because lagging indicators are often sufficient for confirmation but not sufficient for prevention. If the first signal you see is a visible outage, you are already in reactive mode. If you can see queue growth or resource pressure earlier, you have time to reduce load, scale capacity, or roll back safely.
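To make the idea of a leading indicator concrete, the sketch below fits a straight line to recent queue-depth samples and estimates how many sampling intervals remain before a capacity limit is reached. It assumes evenly spaced samples and roughly linear growth, and the function names and the limit value are hypothetical.

```python
def slope_per_sample(samples):
    """Least-squares slope of evenly spaced samples (units per interval)."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples to estimate a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    numerator = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(samples))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def intervals_until_limit(samples, limit):
    """Estimated intervals before the latest value reaches `limit`.

    Returns None when the series is flat or shrinking.
    """
    slope = slope_per_sample(samples)
    if slope <= 0:
        return None
    return (limit - samples[-1]) / slope


if __name__ == "__main__":
    queue_depth = [100, 120, 140, 160, 180]  # one sample per minute (assumed)
    print(slope_per_sample(queue_depth))            # growth per minute
    print(intervals_until_limit(queue_depth, 500))  # minutes of headroom left
```

No error has occurred in this data, so a lagging indicator would show nothing. The trend is what gives the team time to scale or shed load.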

Observability helps teams shift toward leading signals by collecting enough context to spot trends, not just failures. A good observability setup answers not only “what failed?” but also “what is becoming risky?”

What this means for the business is earlier action and less user impact. You spend less on crisis recovery and more on preventing incidents before they reach customers.

What are SLA, SLO, and SLI, and how do they fit together?

An SLI, or Service Level Indicator, is the measured signal. An SLO, or Service Level Objective, is the internal target for that signal. An SLA, or Service Level Agreement, is the external commitment you make to a customer.

The relationship is layered. The SLI is what you measure, the SLO is the target you set for that measurement, and the SLA is the promise you make outside the company based on that target. Google’s SRE material uses this structure because it creates clarity around reliability, customer expectations, and operational decisions.

Error budgets complete the picture. An error budget is the amount of unreliability the SLO permits: for a 99.9% target, the budget is the remaining 0.1%. When the budget is healthy, teams can move faster. When the budget is exhausted, teams should slow feature work and focus on reliability. This creates a clear balance between innovation and risk control.
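The arithmetic behind an error budget is small enough to sketch. The example below assumes a request-based SLI (good requests divided by total requests) and a 30-day window. The function names and the sample numbers are illustrative only.

```python
def sli(good_requests, total_requests):
    """Service Level Indicator: the fraction of requests that were good."""
    return good_requests / total_requests


def error_budget_remaining(good_requests, total_requests, slo_target):
    """Fraction of the error budget still unspent (negative if overspent)."""
    allowed_bad = (1.0 - slo_target) * total_requests
    actual_bad = total_requests - good_requests
    return 1.0 - actual_bad / allowed_bad


def error_budget_minutes(slo_target, window_days=30):
    """The same budget expressed as minutes of full downtime in the window."""
    return (1.0 - slo_target) * window_days * 24 * 60


if __name__ == "__main__":
    # 1,000,000 requests, 400 failures, measured against a 99.9% SLO.
    print(sli(999_600, 1_000_000))                            # measured SLI
    print(error_budget_remaining(999_600, 1_000_000, 0.999))  # budget left
    print(error_budget_minutes(0.999))                        # budget as downtime
```

With a 99.9% SLO, one million requests allow about 1,000 failures. After 400 failures roughly 60% of the budget remains, and the same target over 30 days corresponds to about 43 minutes of full downtime.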

What this means for the business is better control over reliability tradeoffs. Leaders can decide, in measurable terms, when to push new features and when to protect the customer experience.

What is OpenTelemetry, and why is it the foundation of modern observability?

OpenTelemetry is a vendor-neutral, open-source observability framework and specification. It provides teams with a single standard for collecting, processing, and exporting telemetry data, including traces, metrics, and logs.

Its main value is unified instrumentation. Instead of building separate instrumentation for separate backends, teams can instrument once and send data wherever they need it. That reduces lock-in, avoids inconsistent formats, and keeps application code from becoming tied to a specific observability stack.

OpenTelemetry is not just APIs. It also includes SDKs, a Collector, semantic conventions, and context propagation. SDKs let code emit telemetry in a standard way. The Collector receives, processes, and exports telemetry. Semantic conventions standardize names and attributes, making data comparable across services and teams. Context propagation lets the request context move across service boundaries so signals can be correlated.
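Context propagation is easier to picture with the header itself. The sketch below builds and forwards a W3C Trace Context `traceparent` value, the format OpenTelemetry propagates by default, using only the Python standard library. It handles version `00` only and is an illustration of the wire format, not the OpenTelemetry SDK's implementation. The function names are hypothetical.

```python
import re
import secrets

# version "00" - 32 hex trace-id - 16 hex parent span-id - 2 hex flags
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled=True):
    """Start a new trace at the edge of the system."""
    trace_id = secrets.token_hex(16)  # 16 random bytes -> 32 hex characters
    span_id = secrets.token_hex(8)    # 8 random bytes -> 16 hex characters
    flags = "01" if sampled else "00"
    return f"00-{trace_id}-{span_id}-{flags}"


def child_traceparent(incoming):
    """Header for an outgoing call: same trace ID, new span ID.

    Falls back to a fresh trace when the incoming header is missing or malformed.
    """
    match = TRACEPARENT_RE.match(incoming or "")
    if match is None:
        return new_traceparent()
    trace_id, _parent_span_id, flags = match.groups()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


if __name__ == "__main__":
    incoming = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
    print(child_traceparent(incoming))
```

Because the trace ID survives every hop while the span ID changes, a backend can reassemble the calls made by separate services into one request path.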

It has become the practical industry standard because it is backed by the CNCF, supported by most major observability vendors, and already used in production across many organizations. At the time of writing, CNCF lists OpenTelemetry as an incubating project, not a graduated one, but it is mature and widely established.

What this means for the business is portability and lower long-term risk. You can change backends without rebuilding your instrumentation strategy, which protects investment and reduces future migration cost.

Observability adoption: A crawl, walk, run framework for engineering teams

Crawl: Establish baseline visibility

Crawl means establishing baseline visibility. Start by centralizing logs, instrumenting key services with metrics, and defining your first SLIs for the services that matter most.

What you gain is faster triage and less guesswork during incidents. Signs you are ready to move on include clear logs for the main user flows, a small set of trusted service metrics, and an on-call team that can quickly identify where to look.

What this means for the business is reduced recovery time from the first day of the program. That gives leadership a clearer view of service health and engineering a smaller search space during incidents.

Walk: Add distributed tracing and connect signals to business priorities

Walk means adding distributed tracing and correlating the three signal types. Define SLOs and error budgets, then use them to connect reliability decisions to business priorities.

What you gain is faster root-cause analysis and a better shared understanding across teams. Signs you are ready to move on include tracing being available for critical request paths, incident reviews referencing SLOs, and teams making release or rollback decisions based on observable data rather than intuition.

What this means for the business is less time spent on incident calls and more time spent on product work.

Run: Embed observability into daily engineering practice

Run means making observability part of daily engineering work. Use full OpenTelemetry adoption, anomaly detection, continuous SLO feedback loops, and a culture where teams expect observable data in design, testing, deployment, and incident response.

What you gain is proactive detection and a stronger culture of reliability. Signs you are operating at this level: new services are instrumented by default, alerts are driven by service impact, and reliability reviews happen before a problem becomes user-facing.

What this means for the business is a more predictable operating model. Reliability becomes part of how the company builds, not just how it responds.

The architecture behind this path has five parts: sources, an instrumentation layer, three signal lanes, a backend, and a consumption layer. The sources are the places where behavior begins: application code, runtime infrastructure, and outside services. The instrumentation layer adds standard telemetry at the point where work happens. The three signal lanes (logs, metrics, and traces) carry different kinds of information, but they stay connected through shared context. The backend stores and processes the data, and the consumption layer turns it into human action through dashboards, alerts, and incident response. The Collector is the main handoff point because it can receive, process, and export telemetry in a vendor-neutral way.

Business value: This architecture helps teams find user-facing problems faster, reduce downtime costs, and make reliability visible to leaders in a way they can understand without needing technical detail.

What good observability looks like in practice

Good observability means a team can answer three questions quickly: what is wrong, where it is happening, and why it is happening. They do not need prior knowledge of the exact failure mode to begin the diagnosis.

It also means observability is treated as a standard engineering practice, not a cleanup task after production pain starts. Google’s SRE material shows that good monitoring, alerting, SLOs, and blameless postmortems all support the same goal: better service health and better learning after an incident.

At the culture level, good observability reduces blame and increases clarity. Teams spend less time debating what happened and more time fixing the system. That is what makes it useful over the long term.

Why observability is a core engineering discipline, not a tooling decision

Observability is not a nice extra. It is part of how modern teams keep services reliable while still shipping change. DORA’s work shows that software delivery performance is a measurable organizational capability, and SRE practice shows that reliability work must be tied to SLOs, alerts, toil reduction, and learning from incidents.

If you collect the right signals and make them usable, you reduce incident time, improve customer experience, and create a common language for business and engineering decisions. If you do not, every incident becomes a search problem, and every search problem costs time, money, and trust.

Where to start: Recommended first actions

Crawl

For Business

- Pick one observability vendor with a free or low-cost entry tier and cap scope to the most important 1–3 services.

- Define the first 3–5 SLIs around customer impact, not platform completeness.

- Decide the ingest ceiling you will not cross before moving to the next phase.

For Engineering

- Instrument the chosen services with OpenTelemetry SDK or auto-instrumentation.

- Send data directly to SaaS with source-side log filtering and basic trace sampling.

- Centralize logs first; keep retention and dashboards minimal.
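As a sketch of what source-side log filtering and basic trace sampling can look like, the example below drops low-value log records before they leave the process and keeps a fixed fraction of traces based on the trace ID. It uses only the Python standard library. The `/healthz` path, the class and function names, and the 10% ratio are assumptions for illustration, and the sampling function mimics the idea of ratio-based sampling rather than reproducing any SDK's exact algorithm.

```python
import logging


class DropNoiseFilter(logging.Filter):
    """Drop DEBUG records and health-check chatter at the source."""

    def filter(self, record):
        if record.levelno < logging.INFO:
            return False
        # "/healthz" is a hypothetical health-check path.
        return "/healthz" not in record.getMessage()


def keep_trace(trace_id_hex, ratio):
    """Deterministically keep about `ratio` of traces.

    Every service that sees the same trace ID reaches the same decision,
    so a sampled trace stays complete across service boundaries.
    """
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    bucket = int(trace_id_hex[-16:], 16)  # lower 64 bits of the trace ID
    return bucket < ratio * (1 << 64)


if __name__ == "__main__":
    handler = logging.StreamHandler()
    handler.addFilter(DropNoiseFilter())
    logger = logging.getLogger("checkout")
    logger.setLevel(logging.DEBUG)
    logger.addHandler(handler)

    logger.info("GET /healthz 200")        # filtered out
    logger.info("GET /checkout 500")       # kept
    print(keep_trace("4bf92f3577b34da6a3ce929d0e0e4736", ratio=0.10))
```

Filtering and sampling at the source keeps ingest volume, and therefore cost, predictable while the scope is still small.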

Walk

For Business

- Tie SLOs to business priorities such as availability, checkout latency, or failed-job rate.

- Approve a managed collector budget and a paid SaaS tier only after the volume is visible.

- Set explicit exit criteria for the next phase: volume, team size, compliance, or vendor-cost thresholds.

For Engineering

- Deploy an independent OpenTelemetry Collector with at least two replicas.

- Add distributed tracing across all core services and move sampling decisions to the collector.

- Measure ingest by signal type, then tune sampling, cardinality, and retention before adding more tools.
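Cardinality is often the least intuitive of these tuning knobs, so a small sketch may help. The example below counts distinct label combinations (time series) per metric and shows how removing one high-cardinality label changes the count. The metric name, label names, and sample data are hypothetical.

```python
from collections import defaultdict


def series_cardinality(samples):
    """Count distinct label sets per metric name.

    `samples` is an iterable of (metric_name, labels_dict) pairs.
    """
    series = defaultdict(set)
    for name, labels in samples:
        series[name].add(frozenset(labels.items()))
    return {name: len(label_sets) for name, label_sets in series.items()}


def drop_labels(samples, unwanted):
    """Return samples with the unwanted label keys removed."""
    return [
        (name, {key: value for key, value in labels.items() if key not in unwanted})
        for name, labels in samples
    ]


if __name__ == "__main__":
    samples = [
        ("http_requests_total", {"route": "/checkout", "user_id": "u1"}),
        ("http_requests_total", {"route": "/checkout", "user_id": "u2"}),
        ("http_requests_total", {"route": "/checkout", "user_id": "u3"}),
        ("http_requests_total", {"route": "/cart", "user_id": "u1"}),
    ]
    print(series_cardinality(samples))                            # one series per user and route
    print(series_cardinality(drop_labels(samples, {"user_id"})))  # one series per route
```

A per-user label multiplies the series count by the number of users. Moving that detail into traces or logs usually keeps metric storage manageable.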

Run

For Business

- Treat observability as a shared platform with a named owner and a real budget.

- Put showback/chargeback in place, so teams see the cost of their telemetry.

- Use SLO burn, anomalies, and incident trends as operating signals, not just dashboards.

For Engineering

- Move to a self-hosted stack on EKS: Collector, Prometheus/Mimir, Tempo, Grafana, and Alertmanager.

- Engineer storage, compaction, backup, and retention as first-class concerns.

- Automate alert routing, scaling, upgrades, and disaster recovery.

When business and engineering agree on observability, reliability stops being a vague hope and becomes a managed part of the company’s operations. The organizations that treat reliability as a managed discipline today are the ones that will spend less time recovering from incidents tomorrow and more time building what their customers actually need.